AI-Powered Search Layer for Your Media Archive

Your video archive holds thousands of hours of valuable content—but finding the right clip shouldn’t take hours. MediaCopilot adds an intelligent AI search layer on top of your existing MAM, making every word spoken, every scene, and every face instantly searchable.

Enhancing your archive with AI search is non-disruptive and straightforward.

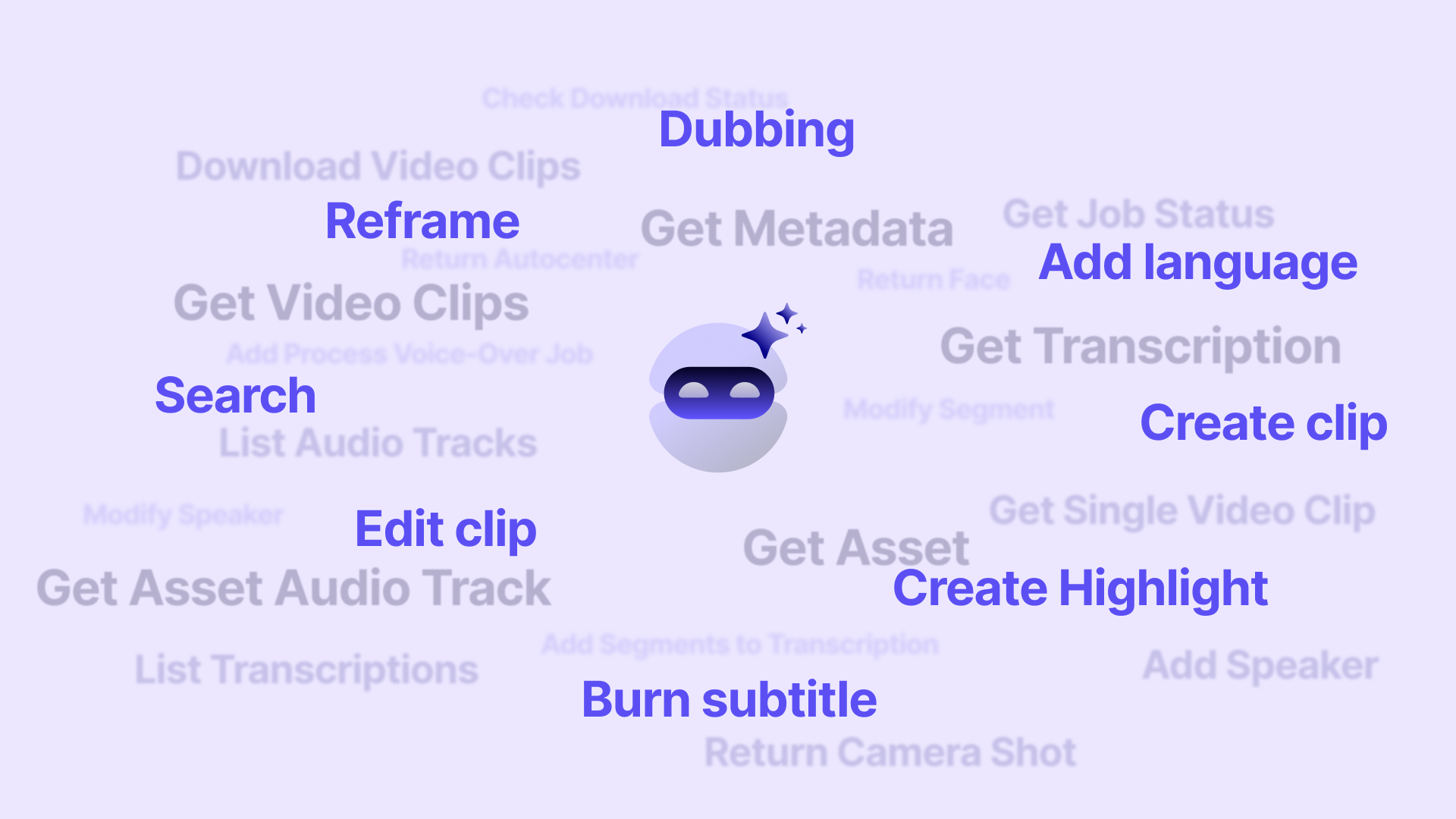

Key Capabilities

Everything you need to search and unlock your media archive.

Find any moment by searching for words spoken in your videos. AI transcription makes every word in your archive searchable.

Search by what appears on screen—objects, locations, actions, and visual elements detected automatically by AI.

Find all appearances of specific people across your entire archive with automatic face detection and speaker identification.

Search using everyday language like ‘goal celebration in Champions League 2024’ instead of rigid keyword matching.

AI generates rich metadata, tags, and descriptions for every asset, filling gaps in your existing catalog data.

Connect MediaCopilot to your existing MAM, storage, or cloud infrastructure without disrupting current workflows.

How to Add AI Search to Your Media Archive

MediaCopilot integrates with your current infrastructure—no migration needed. Your content stays where it is.

The platform automatically transcribes, analyzes, and indexes your archive, enriching each asset with searchable metadata.

Your team can instantly find any moment using speech, visual, or semantic queries—across your entire catalog.

Jump directly to the exact frame, clip the segment, or export it—all from the search results interface.
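
As a rough illustration of the index-then-search flow described above, the sketch below builds a tiny inverted index over timestamped transcript segments and returns the asset and time offset for each match. It is not MediaCopilot's API: the `Segment` type, the `tokenize`, `build_index`, and `search` functions, and the sample asset names are all hypothetical. A real system would presumably work from actual speech-to-text output and rank results semantically rather than by exact word match.

```python
from collections import defaultdict
from dataclasses import dataclass
import re


@dataclass(frozen=True)
class Segment:
    """One transcribed span of an asset (hypothetical structure)."""
    asset_id: str
    start_s: float  # offset from the start of the asset, in seconds
    text: str


def tokenize(text: str) -> list[str]:
    # Lowercase the text and keep alphanumeric runs only.
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(segments: list[Segment]) -> dict[str, set[int]]:
    """Map each word to the positions of the segments that contain it."""
    index: dict[str, set[int]] = defaultdict(set)
    for pos, seg in enumerate(segments):
        for word in tokenize(seg.text):
            index[word].add(pos)
    return index


def search(query: str, segments, index) -> list[tuple[str, float]]:
    """Return (asset_id, start_s) for segments containing every query word."""
    words = tokenize(query)
    if not words:
        return []
    hits = set.intersection(*(index.get(w, set()) for w in words))
    return [(segments[i].asset_id, segments[i].start_s) for i in sorted(hits)]


# Made-up sample data standing in for transcription output.
segments = [
    Segment("match_001.mxf", 12.0, "Kick-off under the lights"),
    Segment("match_001.mxf", 3125.5, "What a goal, and the celebration begins"),
    Segment("presser_007.mp4", 48.2, "The coach praised the late goal"),
]
index = build_index(segments)
results = search("goal celebration", segments, index)
# results == [("match_001.mxf", 3125.5)]
```

Each result pairs an asset with a time offset, which is the information a player needs to jump straight to the matching moment. The source files themselves are never moved or modified, only read and indexed.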

Why Teams Choose MediaCopilot

Who It’s For

Make decades of broadcast content instantly searchable for news research, anniversary programming, and content repurposing.

Give editors and producers instant access to b-roll, interview footage, and archival content without waiting for librarian support.

Find relevant archival footage for developing stories in seconds, dramatically speeding up news production workflows.

Instantly locate specific plays, celebrations, interviews, and press conferences across seasons of footage.